Autonomous vehicles (AVs) are moving rapidly into commercial mobility, driven by advances in Artificial Intelligence (AI), sensors, connectivity, and demand for safer, more efficient transportation. Waymo, Alphabet’s autonomous-driving company, reported more than 250,000 autonomous trips per week across Phoenix, San Francisco, Los Angeles, and Austin in 2025, and later said it had begun serving over one million fully autonomous rides every month.

But as the AV industry scales, safety is becoming the real enterprise test. The challenge is no longer just building AV technology; it is proving that the technology can operate safely, consistently, and transparently across unpredictable environments.

Recent incidents show how quickly safety gaps can become business, regulatory, and trust issues. In 2026, the National Highway Traffic Safety Administration (NHTSA) escalated an investigation covering about 3.2 million vehicles over concerns that an automated driving feature may fail to detect degraded visibility, such as glare, dust, or airborne obstructions, or fail to warn drivers in time.

Incidents like these show how critical annotation and safety training are for AVs. What matters is not basic labeling but safety-grade annotation that captures context, intent, uncertainty, and edge cases across multimodal sensor data. In complex real-world scenarios, annotation becomes the operational backbone of safety validation, incident reconstruction, and continuous model improvement.

Why is annotation gaining more relevance?

The need for annotation is rising as AVs move into real-world deployment. A pilot can be limited to a known operating zone, but commercial deployment spans dense cities, highways, suburbs, and mixed-traffic roads where infrastructure quality, visibility, driving behavior, and road conditions vary widely.

The following shifts are making annotation a safety-critical capability:

- Multimodal sensor data needs one reliable ground truth: AVs rely on cameras, Light Detection and Ranging (LiDAR), radar, maps, Global Positioning System (GPS), telemetry, and cabin cameras, each capturing a different layer of the driving environment. Annotation helps align these inputs into a single, consistent view so the system can understand what it sees, where objects are, how they are moving, and how the vehicle responded

- Edge cases now define safety performance: AVs must learn from rare but safety-critical scenarios, such as jaywalking pedestrians, sudden braking, emergency vehicles, blocked lanes, and unusual road layouts. Annotation helps identify, classify, and structure these events so they can be reused for training, simulation, validation, and retraining

- Context is harder than detection: A pedestrian near a curb, a slowing vehicle, or a construction-shifted lane can each imply multiple risks. Annotation helps capture the context behind the object – motion, intent, relationships, occlusions, right-of-way, uncertainty, and risk signals – so the AV can understand not just what is present, but what may happen next
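The difference between detection and context can be made concrete with a single annotation record. The sketch below is a minimal illustration that assumes a hypothetical schema. The field names (`distance_to_lane_m`, `intent`, `occlusion`, `has_right_of_way`, `label_confidence`) and the escalation thresholds in `risk_level()` were invented for this example. They do not represent any specific vendor's or regulator's taxonomy.

```python
from dataclasses import dataclass, field
from enum import Enum


class Intent(Enum):
    # Hypothetical intent labels an annotator might assign to a road user
    STATIONARY = "stationary"
    CROSSING = "crossing"
    YIELDING = "yielding"
    UNKNOWN = "unknown"


@dataclass
class ContextAnnotation:
    """Hypothetical record: one labeled object plus the context around it."""
    track_id: str
    object_class: str              # what is present, e.g. "pedestrian"
    distance_to_lane_m: float      # spatial relationship to the ego vehicle's lane
    speed_mps: float               # observed motion
    intent: Intent = Intent.UNKNOWN
    occlusion: float = 0.0         # fraction of the object hidden, 0.0 to 1.0
    has_right_of_way: bool = False
    label_confidence: float = 1.0  # annotator certainty, 0.0 to 1.0
    notes: list[str] = field(default_factory=list)

    def risk_level(self) -> str:
        """Illustrative escalation rule (assumed thresholds), not a validated safety metric."""
        near_lane = self.distance_to_lane_m < 1.5
        uncertain = self.occlusion > 0.5 or self.label_confidence < 0.7
        if near_lane and self.intent is Intent.CROSSING:
            return "high"
        if near_lane and (uncertain or self.intent is Intent.UNKNOWN):
            return "elevated"
        return "low"


# A pedestrian near the curb who is labeled as crossing
ped = ContextAnnotation("ped-017", "pedestrian", distance_to_lane_m=0.8,
                        speed_mps=1.2, intent=Intent.CROSSING)
print(ped.risk_level())  # high
```

In this sketch, two records with the same `object_class` can receive different risk levels depending on intent, occlusion, and proximity. A detection-only label such as "pedestrian" cannot express that distinction.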

Regulation is further reinforcing this shift. NHTSA’s crash-reporting requirements for automated driving systems (ADS) and SAE Level 2 advanced driver assistance systems (ADAS), along with Europe’s type-approval rules for ADS (Regulation (EU) 2022/1426), are pushing AV companies beyond performance claims toward traceable evidence of system behavior, missed detections, incident review, and model improvement.

Annotation creates the evidence layer behind AV safety. It shows what the system saw, what it missed, how it interpreted the scene, and what data should feed back into testing and retraining. Without high-quality annotation, AV companies cannot prove safety, learn from incidents, or scale with trust.

The AV safety loop: train, test, explain, improve

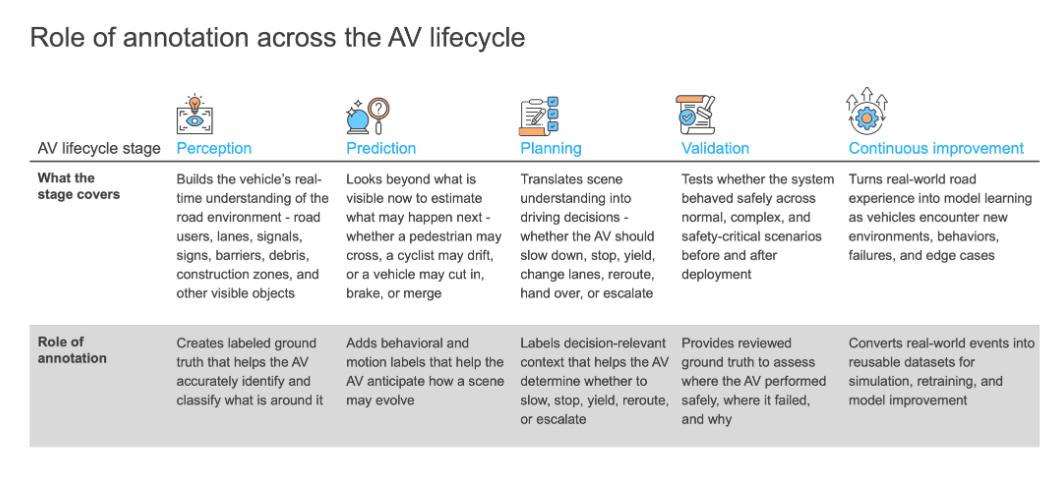

As AV systems encounter more complex and rare scenarios and face growing regulatory scrutiny, annotation becomes the mechanism through which enterprises train, test, explain, and improve safety performance. Exhibit 1 highlights how annotation supports the AV lifecycle across perception, prediction, planning, validation, and continuous improvement.

What the AV annotation stack must cover

What the AV annotation stack must cover

The annotation stack for AVs is broad and multimodal. It spans multiple layers, from what the vehicle sees outside to how it interprets space, scenarios, cabin activity, and privacy risk. Exhibit 2 outlines the key annotation layers required across the AV stack.

The way forward: annotation as a strategic safety capability

The way forward: annotation as a strategic safety capability

As AVs move into more complex real-world environments, enterprise expectations of annotation will shift from labeling efficiency to safety-grade data readiness. Enterprises will need annotation programs that do more than produce datasets; they must help prove system performance, uncover model blind spots, support regulatory evidence, and continuously improve AV behavior after deployment.

Enterprises will have to continuously:

- Build a unified ground-truth strategy across camera, LiDAR, radar, maps, telemetry, simulation, and cabin data so models learn from one consistent view of the driving environment

- Redesign annotation taxonomies around safety context by capturing motion, intent, occlusion, right-of-way, risk escalation, and scenario progression, not just object categories

- Create dedicated edge-case pipelines to identify rare but safety-critical scenarios such as blocked lanes, emergency vehicles, poor visibility, jaywalking, and unusual road layouts

- Connect incident review with model improvement so disengagements, near misses, false positives, false negatives, and crashes feed directly into validation, simulation, and retraining datasets

- Embed privacy and auditability into annotation workflows by protecting faces, license plates, cabin data, and location-sensitive information, and by maintaining traceable records for regulatory readiness

- Make annotation a continuous operating capability that evolves with new roads, weather conditions, traffic behaviors, vehicle platforms, and regulatory expectations

The next phase of autonomous mobility will be determined by which companies can build and maintain a safety-grade annotation layer at scale, with accuracy, speed, privacy safeguards, and continuous feedback. That is where annotation shifts from being a data task to being a strategic capability.

If you found this blog interesting, check out When AI Gets a Body: How Physical AI Moves Trust and Safety from Content Moderation to Real-Time Action Governance on the Everest Group Research Portal. It explores how AI systems are moving from digital decision-making to real-world action, and why enterprises need stronger governance, safety, and accountability models.

To take the conversation forward, please reach out to Dhruv Khosla and Tushar Pathela.