In this article, you will learn how to apply a structured decision tree to choose the right agentic design pattern for any AI system you are building.

Topics we will cover include:

- Why pattern selection is a critical design decision, and what assumptions underlie each major agentic design pattern.

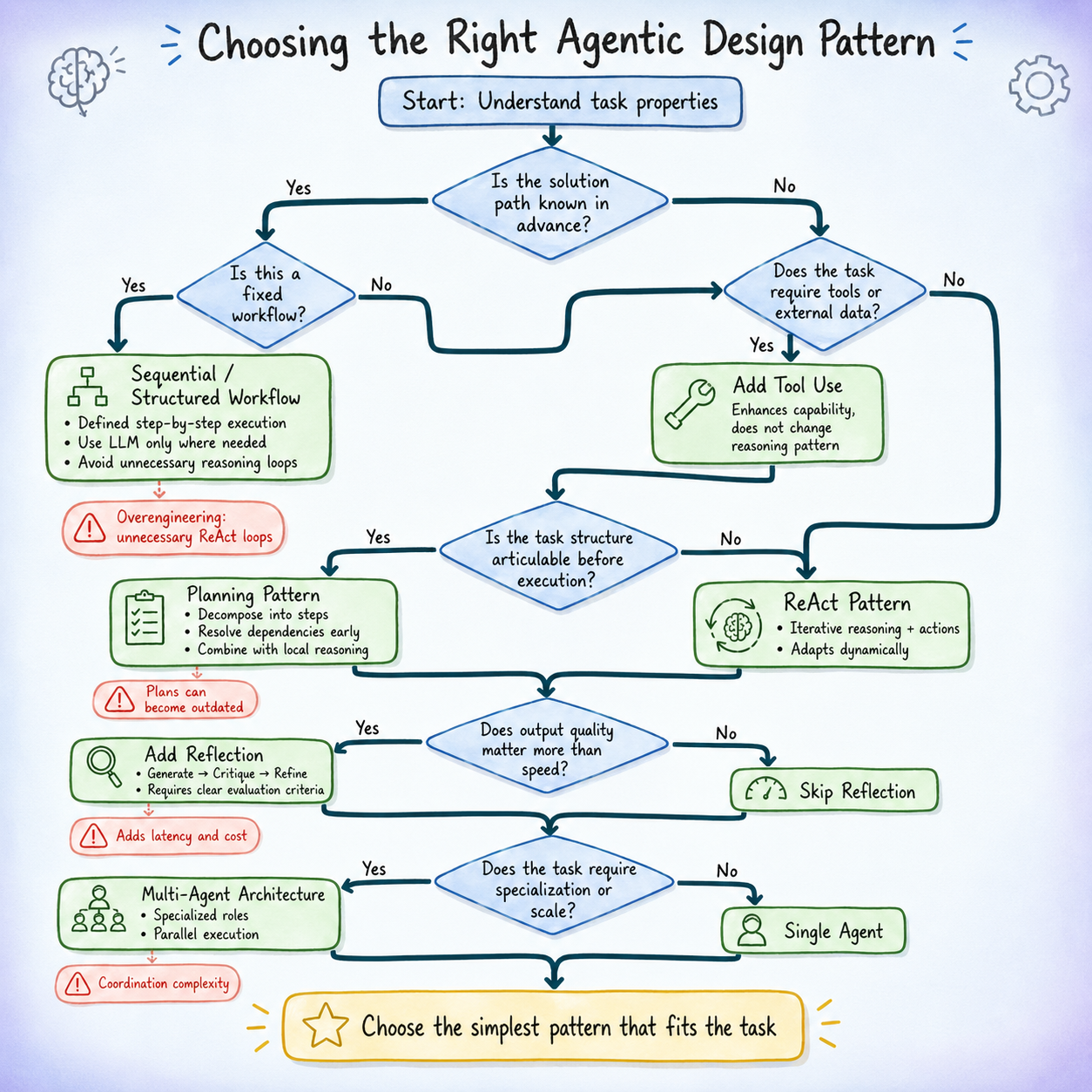

- A five-question decision tree that maps concrete task properties to the most appropriate starting pattern.

- Common failure signals for each pattern and the targeted fixes that address them.

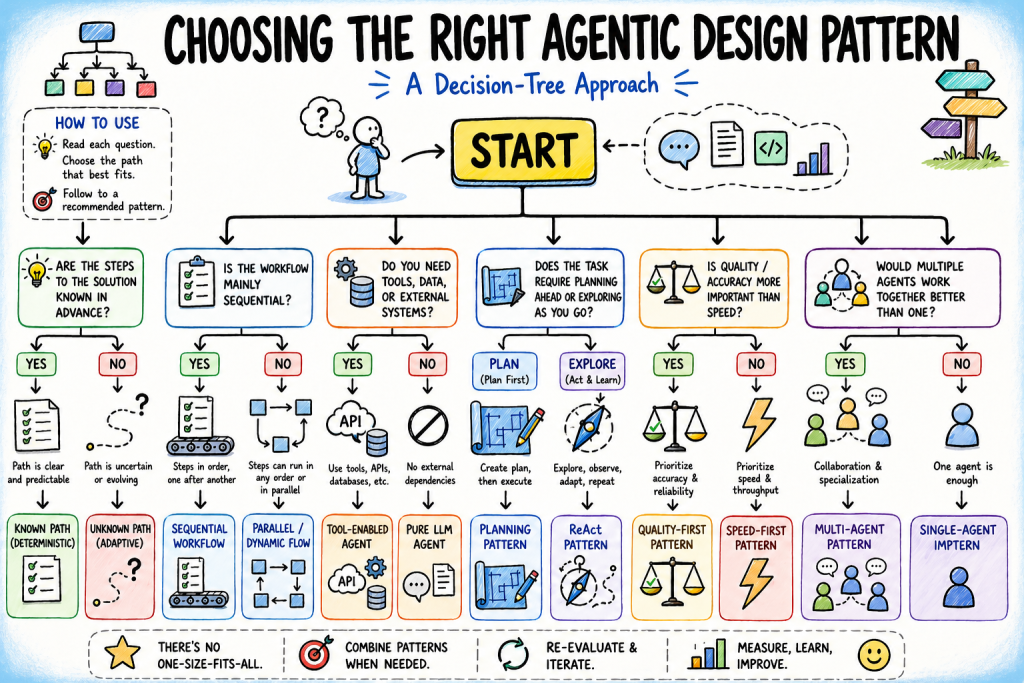

Choosing the Right Agentic Design Pattern: A Decision Tree Approach (click to enlarge)

Introduction

Most agentic architecture mistakes start with a simple misread of the problem. Developers often pick a pattern based on what looks impressive or familiar, not what the task actually needs. A multi-agent system from a talk can look like the “right” answer, so they spend weeks building orchestration for something a single well-prompted agent with a couple of tools could handle in a day. Or they go the other direction, keep things too simple, and only discover in production that the system can’t adapt or scale, forcing a redesign under pressure.

Pattern selection is where the real design work happens. The agentic design patterns themselves are well documented. What is less documented is the decision logic for choosing between them. That logic is what this article is about.

The approach here is a decision tree: a series of questions about your task, your constraints, and your acceptable trade-offs that leads you to the right starting pattern. The tree doesn’t produce a final answer; agent architectures evolve as feedback accumulates. But it gives you a principled starting point, and it makes the reasoning behind your choice clear enough to revisit when things change.

Why Is Agentic Design Pattern Selection Important?

Before working through the decision tree, it is important to clearly define what is at stake when selecting a design pattern.

Each agentic design pattern is based on specific assumptions about the structure and demands of a task. Here are some of them:

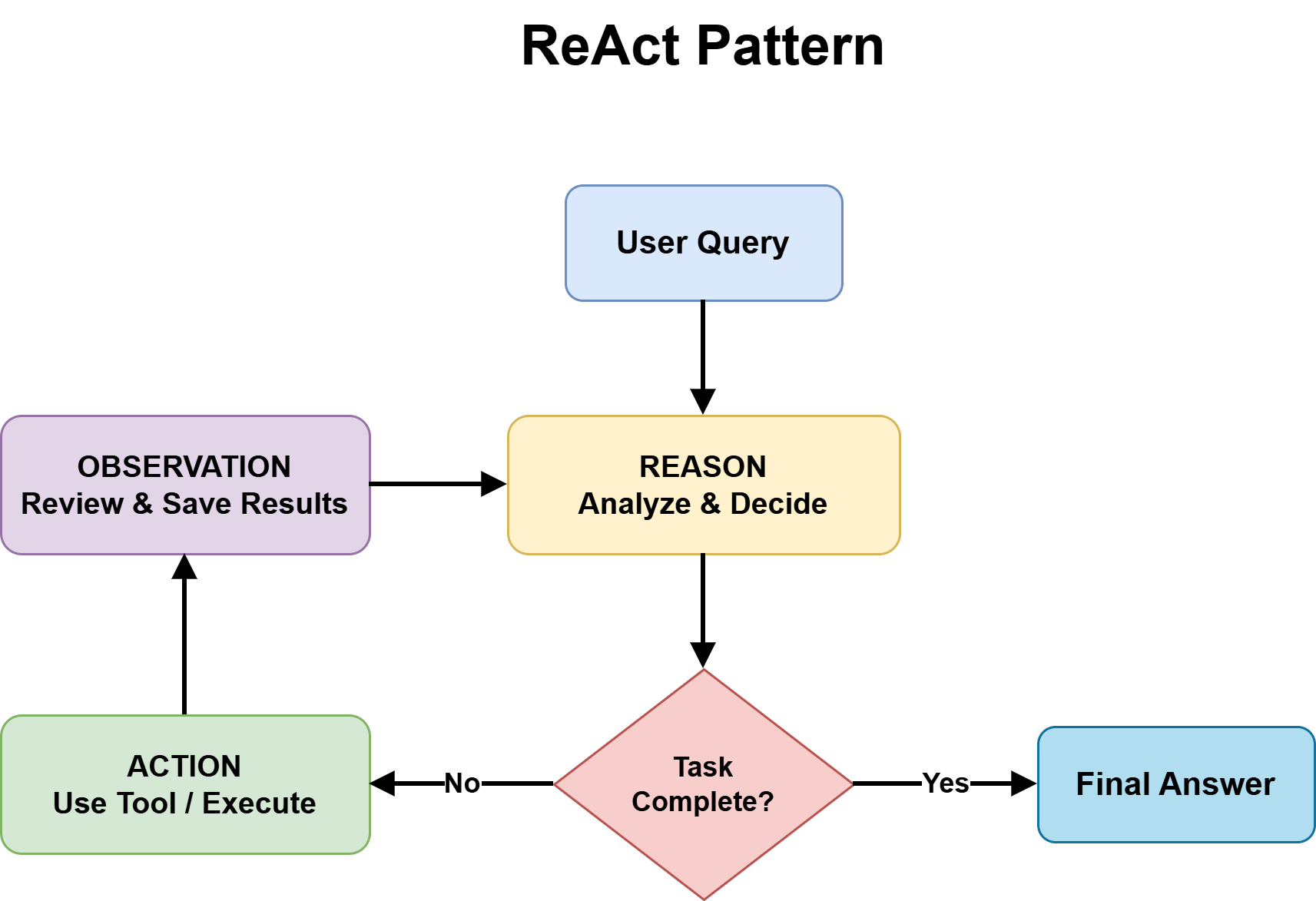

- ReAct pattern treats the next best action as not fully knowable in advance, and relies on combining reasoning with tool use at each step to improve decisions.

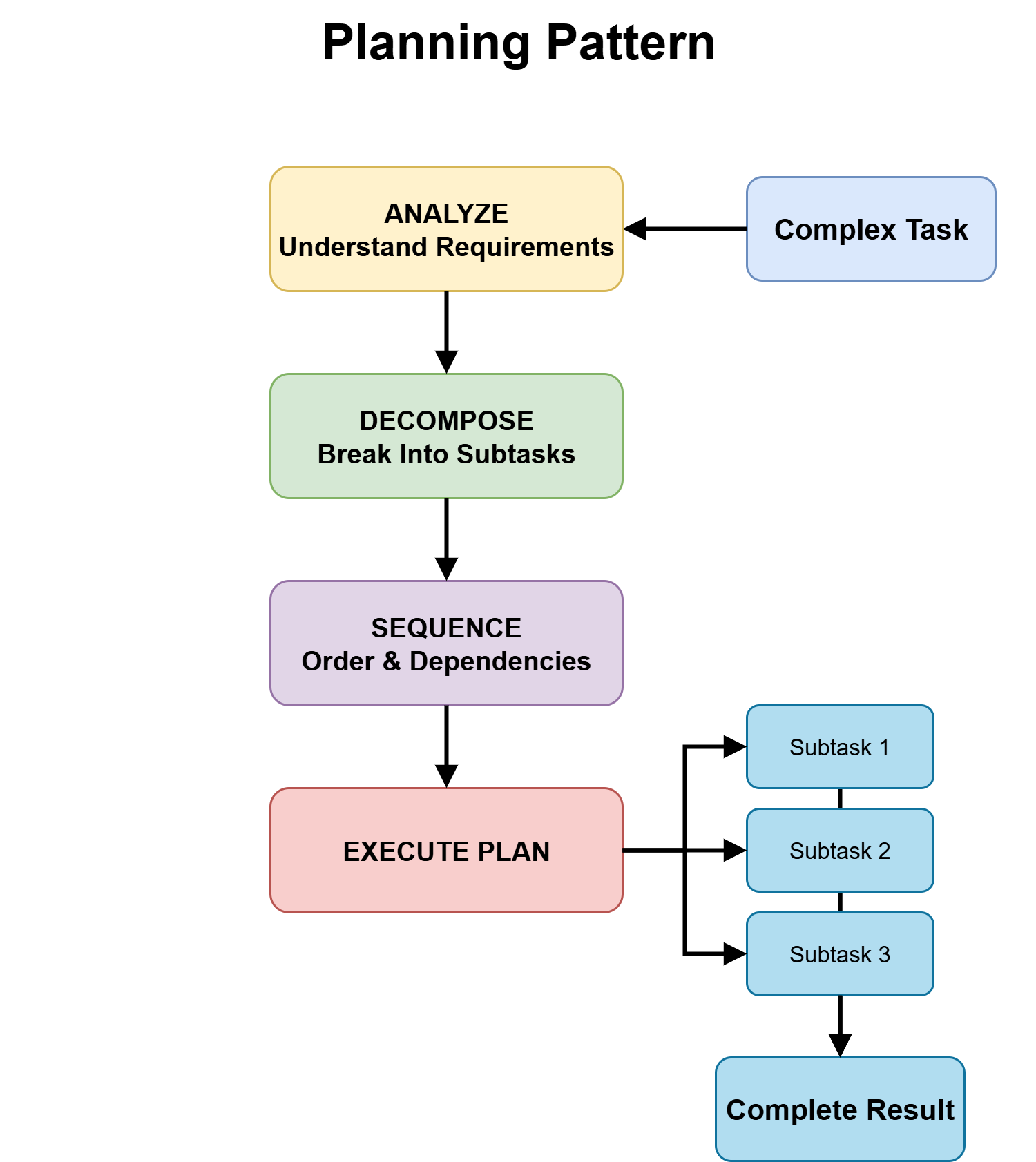

- Planning is based on the idea that the major structure of the task can be identified upfront, and that defining an execution roadmap improves downstream reliability.

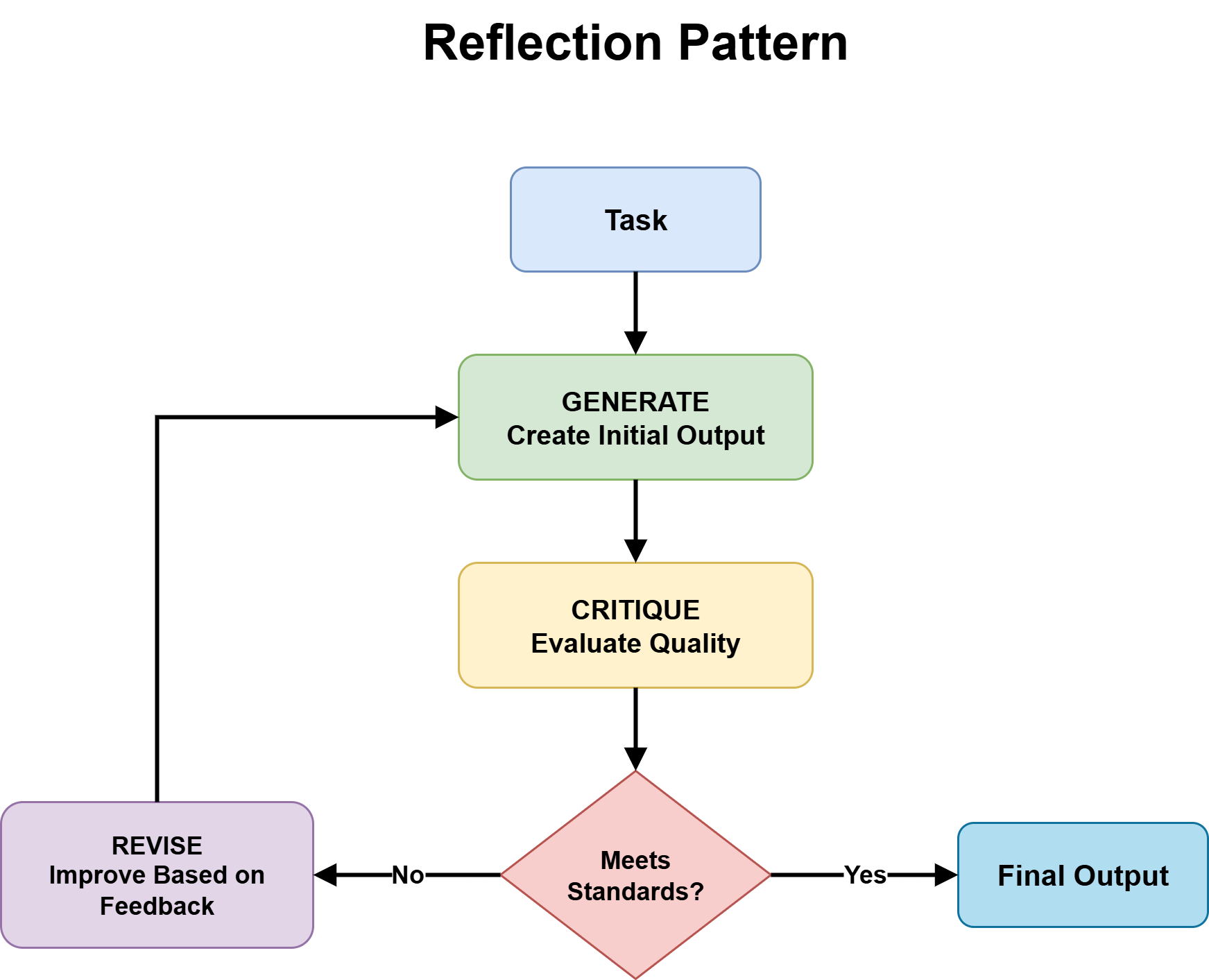

- Reflection pattern is grounded in the expectation that first-pass outputs are often incomplete or flawed, and that iterative self-critique and refinement improve final quality enough to justify the added cost.

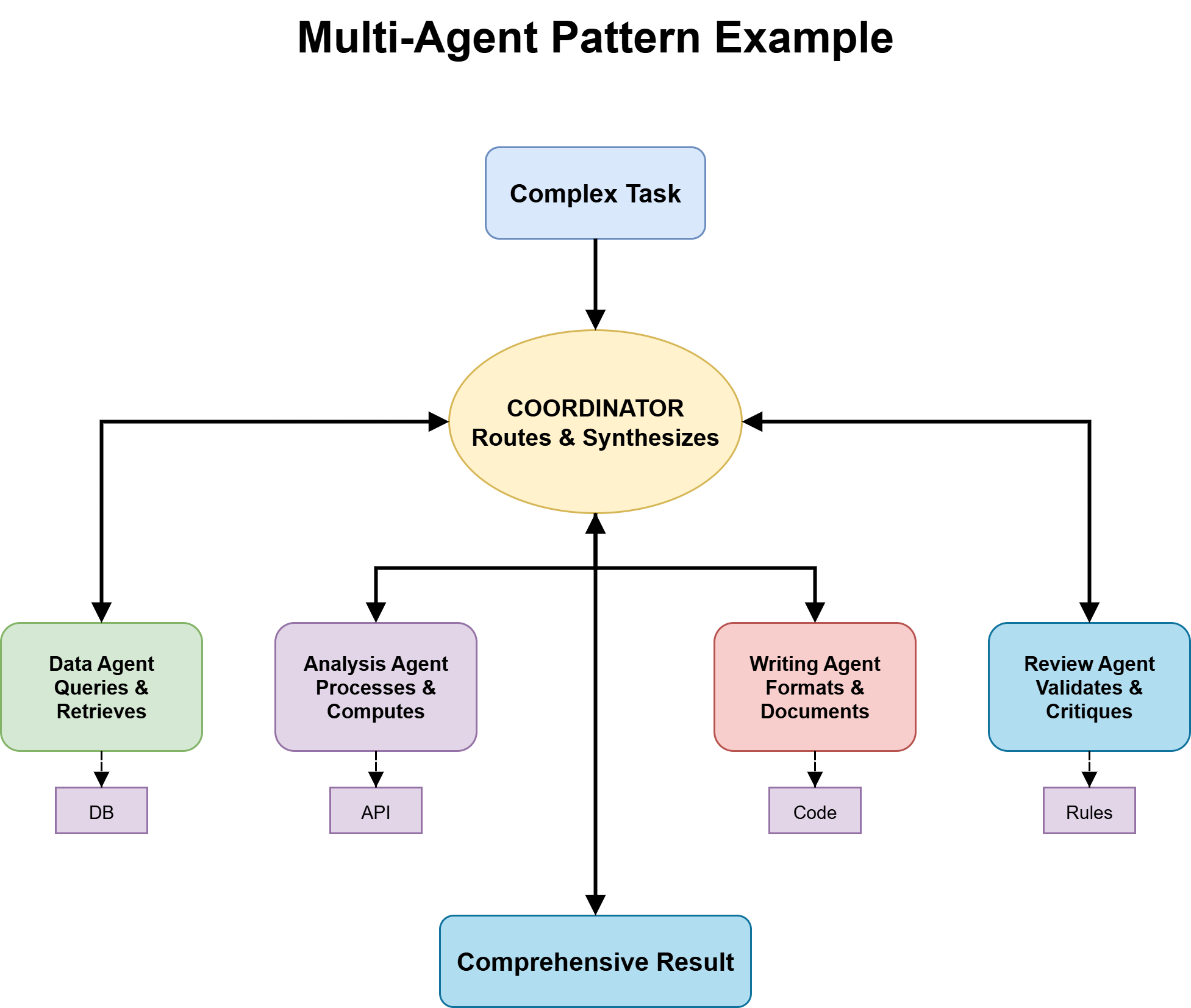

- Multi-agent approaches operate on the belief that the task benefits from decomposition into specialized roles, where parallel or modular execution outweighs the overhead of coordination.

When these assumptions match the task, the pattern adds real value. When they don’t, it adds overhead without improving results. For instance, planning can become rigid when task structure only emerges during execution, reflection can waste resources on simple queries, and multi-agent setups can add unnecessary complexity for problems a single agent can solve.

The decision tree below helps guide deliberate pattern selection. Each branch reflects a key task property that determines which assumptions actually hold.

Further reading on agentic design patterns: The Roadmap to Mastering Agentic AI Design Patterns

A Decision Tree for Choosing the Right Agentic Design Pattern

The tree has five branching questions, each one narrowing the pattern space based on a concrete property of the task at hand. Work through them in order.

Question 1: Is the Solution Path Known in Advance?

This question separates fixed workflows from adaptive ones.

A known solution path means the full step-by-step process can be defined before execution. For example:

- Invoice processing: extract fields → validate → store → confirm

- Employee onboarding: create accounts → send welcome email → assign manager → schedule orientation

These are predictable workflows where the same steps apply every time.

An unknown solution path means each step depends on previous outputs. Research tasks that follow new evidence, customer support that branches based on user input, or debugging that shifts hypotheses based on earlier results cannot be fully planned in advance.

If the path is known → go to Question 2a. If unknown → go to Question 2b.

Question 2a: Is This a Fixed Workflow?

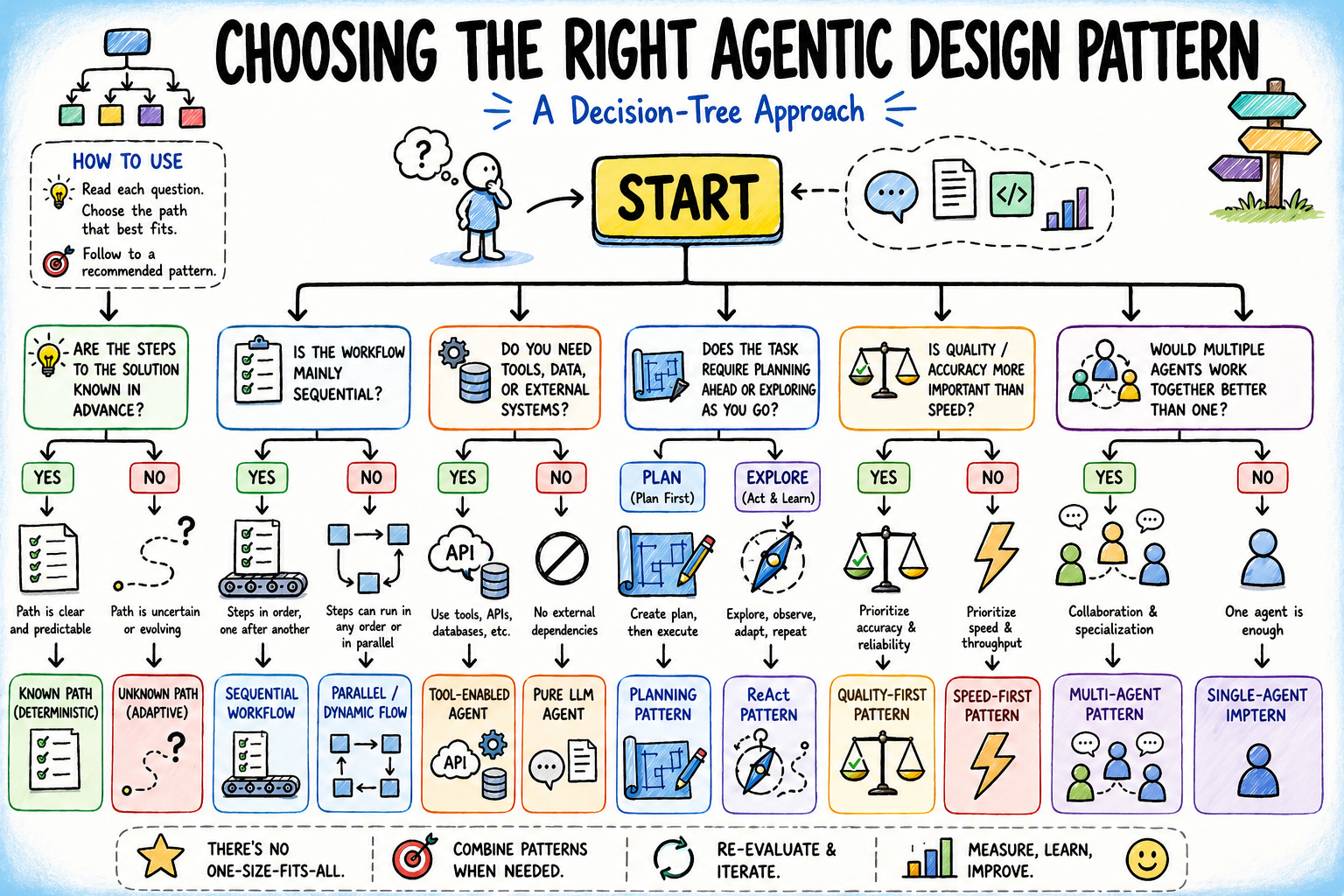

For known, stable paths, use a sequential workflow pattern. The agent follows explicit steps in order, passing outputs from one stage to the next until completion.

Sequential workflow pattern

The key design choice is where reasoning is needed. Use the model only for tasks like interpretation or generation, while deterministic code handles everything else. This keeps systems fast, predictable, and cost-efficient.

The main failure mode is over-engineering — adding ReAct-style reasoning where every step is already defined. If the process is fully deterministic, the agent should execute, not decide.

If the workflow starts breaking on edge cases or requires new steps not originally defined, it may be time to move to Question 2b.

Question 2b: Does the Task Require Tool Access or External Information?

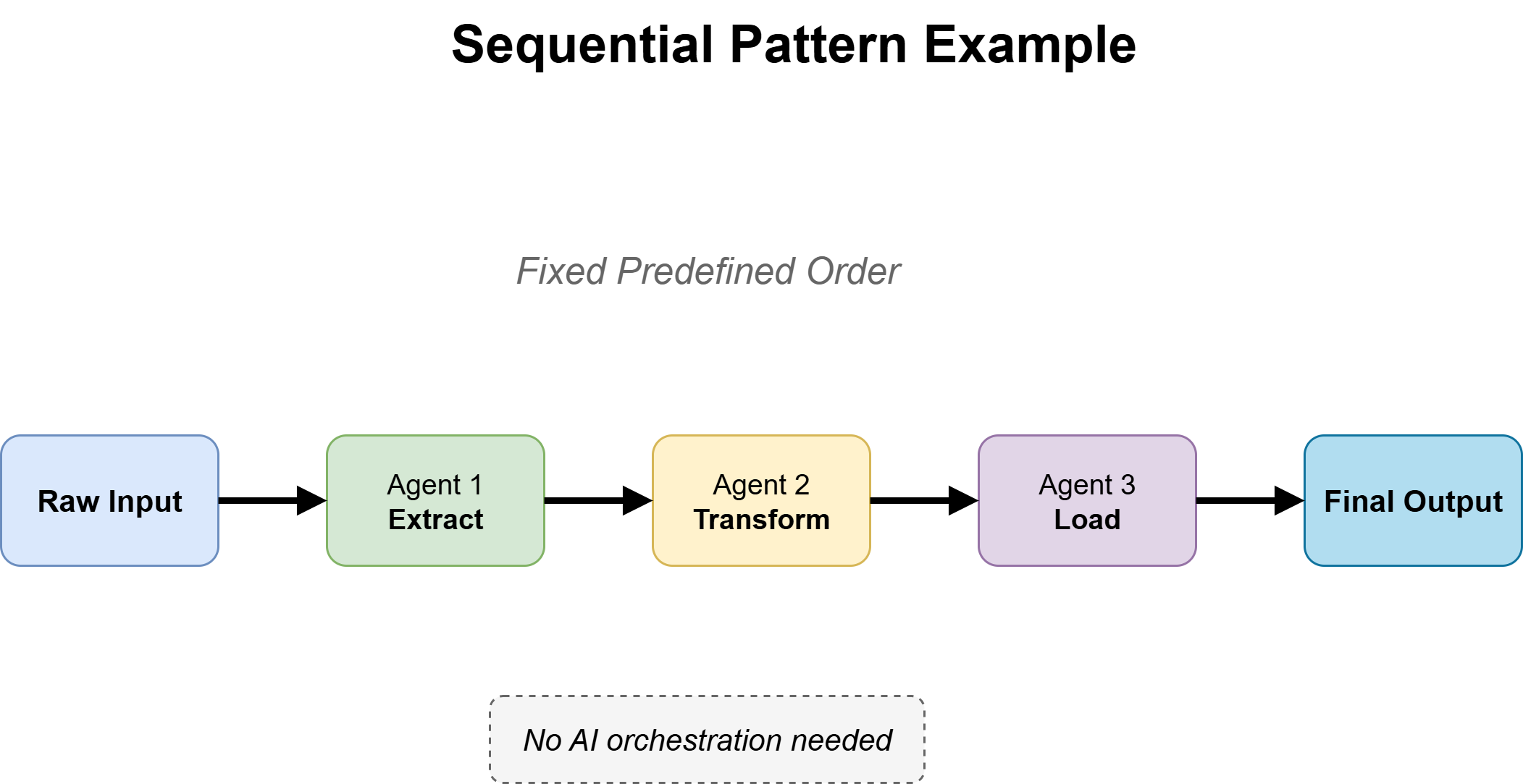

For tasks with unknown solution paths, the next question is whether the agent needs to interact with the external world — query databases, call APIs, retrieve documents, run code — or whether it can operate purely on information already in its context.

Tool use pattern

The answer is almost always yes: tool use is required. An agent that can only reason over its training data and the conversation context handles a narrow slice of real-world tasks. The moment the task involves current information, external state, or system-level actions, tool use becomes the foundation everything else sits on.

Effective tool design with clear contracts, inputs, and outputs matters, but for pattern selection the main point is simpler: tools add capability without changing the reasoning pattern. A ReAct agent with tools is still ReAct, and a planning agent with tools is still planning. Tool use sits under the reasoning layer, not alongside it.

Move forward to Question 3 with tool use assumed unless the task is genuinely self-contained.

Question 3: Is the Task Structure Articulable Before Execution Begins?

This question separates Planning from ReAct and is often skipped in practice, with developers defaulting to ReAct. ReAct works by iteratively alternating between reasoning steps and tool actions, using the results of each step to decide what to do next until a stopping condition is met.

ReAct Pattern

A task is structurally articulable when it can be broken into ordered subtasks with clear dependencies before execution. The full details may be unknown, but the main stages and sequence are clear. For example, building a feature (design → implement → test), provisioning systems in order, or producing a research report (gather → synthesize → write) all have a defined structure.

The planning pattern works well when this structure exists, because it exposes dependencies early and avoids mid-execution surprises. Without it, agents can only discover mistakes after spending time and compute on the wrong path.

Planning Pattern

But planning also has costs: an extra upfront step, reliance on the quality of the initial plan, and reduced flexibility when real-world conditions differ from expectations. When structure only becomes clear through interaction and feedback, planning can be misleading.

If the task is structurally clear → use Planning with ReAct inside steps. If structure emerges during execution → use ReAct and move to Question 4.

Question 4: Does Output Quality Matter More Than Response Speed?

This question introduces the reflection pattern — the generate–critique–refine cycle — and determines whether it should be added on top of the chosen pattern.

Reflection Pattern

Reflection is useful when two conditions are met:

- First, there are clear quality criteria the output can be checked against — such as a valid SQL query, a well-reasoned argument, or a contract with missing elements.

- Second, the cost of errors is high enough to justify an extra pass, such as deployed code or client-facing documents.

It is not useful when these conditions don’t hold. Without clear evaluation criteria, the critic produces vague or misleading feedback. And when speed is important — as in live systems or high-throughput tasks — the extra latency is a drawback.

A key detail is critic independence. If the critic mirrors the generator too closely, it tends to agree rather than evaluate. Strong reflection often requires a separate framing or even a different model.

If quality is important and criteria are clear → add Reflection. If speed matters more or evaluation is unclear → skip it and move to Question 5.

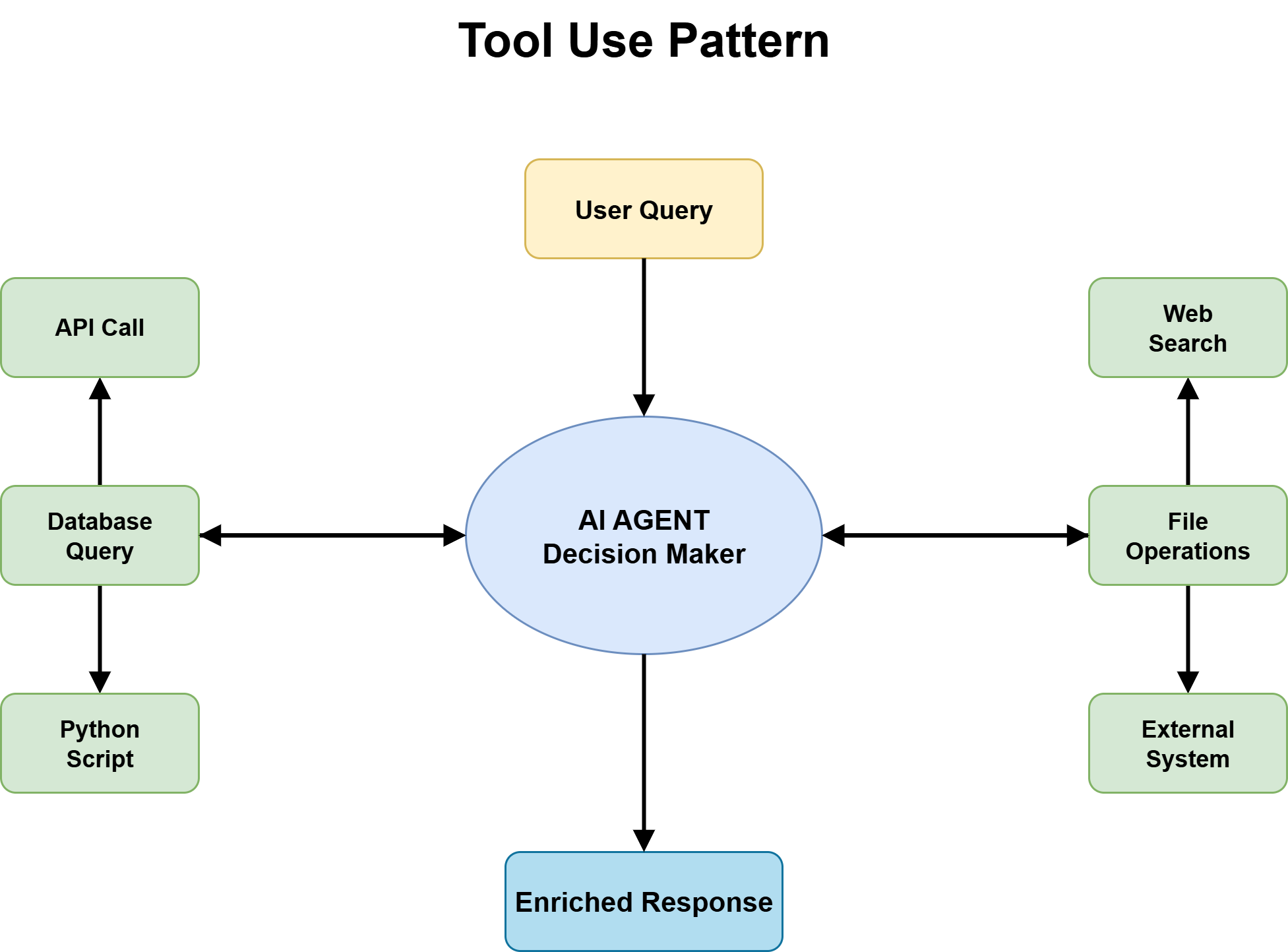

Question 5: Does the Task Have a Specialization or Scale Problem That One Agent Can’t Handle?

This is where you decide whether you need a multi-agent architecture, and it should only be considered after the previous steps have been evaluated carefully.

Multi-Agent Pattern

Multi-agent systems are useful when tasks are too large for a single context window, require different kinds of expertise across stages, or benefit from parallel execution to reduce overall time. They can improve performance by splitting work across specialized agents, but they also add coordination overhead, shared state complexity, and more failure points.

The specialization issue appears when different parts of the task need clearly different reasoning styles — such as legal review vs. financial modeling, or coding vs. security auditing. The scale issue appears when work cannot fit into one agent’s context or is being unnecessarily serialized.

If neither applies, a single strong agent is usually enough; the overhead of multiple agents outweighs the benefit. The trigger for multi-agent use should be a clear bottleneck that specialization or scale actually solves, not architectural preference.

When needed, key design choices include task ownership, routing logic, and topology, which can be sequential, parallel, or debate-style coordination.

Putting it all together, we have the following decision tree:

Decision Tree for Choosing the Right Agentic Design Pattern

Decision Tree → Agentic Design Pattern Mapping

Working through all five questions produces one of four common destination patterns. In practice, most production agents end up somewhere between these, with elements of multiple patterns layered together. These are starting points, not final states.

| Resulting Agent Pattern | When to Use | Why It Works |

|---|---|---|

| Single Agent + Tools + ReAct | Unknown solution path, no clear upfront structure, no strict quality constraints, no specialization needs. | Best default for real-world tasks. Flexible exploration via tool use and step-by-step reasoning. Inexpensive failure detection and iterative improvement. |

| Planning Agent + ReAct Execution | Task structure is knowable upfront, but each step requires adaptive reasoning during execution. | Planner defines stages and dependencies; ReAct handles local uncertainty. Reduces mid-execution failure from hidden complexity. |

| Single Agent + Reflection | High-quality output required and latency is acceptable. | Generate → Critique → Refine loop improves correctness. Works best when evaluation criteria are explicit and verifiable. |

| Multi-Agent Specialist System | Strong specialization needs or scale exceeds single-agent capacity. | Coordinator routes tasks to specialists. Enables parallelism and domain expertise, but adds coordination overhead and system complexity. |

Common Agentic Design Pattern Pitfalls (and Fixes)

Choosing a starting pattern from the decision tree is step one. Knowing how to diagnose when the chosen pattern isn’t working depends on reading a few clear signals.

| Signal | What It Means | Suggested Fix |

|---|---|---|

| ReAct looping excessively | Too many steps or revisiting resolved questions; agent is uncertain about progress or structure. | Task likely needs planning, better tool structure, or a clearer stopping condition. |

| Planning agent abandoning plan | Plan is created but not followed; execution keeps diverging from it. | Task is less structured than assumed; switch to lightweight planning + ReAct. |

| Reflection not improving output | Critique cycles don’t meaningfully improve output quality. | Evaluation criteria are unclear or the critic is too aligned with the generator; refine the critique setup. |

| Multi-agent routing failures | Wrong specialist selection or outputs don’t combine well downstream. | Routing logic issue; use deterministic rules for predictable cases instead of LLM routing. |

Next Steps

The decision tree makes agentic pattern selection explicit instead of instinctive. It forces the key questions — solution path, structure, quality needs, and specialization — before any code is written, when mistakes are least costly to fix.

Most issues come from over-engineering too early or staying too simple too long. The patterns themselves are stable; the difficulty is choosing correctly. Let the decision tree guide the starting point, and let practice and results guide the evolution.

For high-stakes applications, incorporate human-in-the-loop checkpoints where reliability, safety, or judgment calls matter most. Here are a few useful resources for further reading: