Why we built the Secure AI Factory

One year ago at NVIDIA GTC, Cisco and NVIDIA introduced the Cisco Secure AI Factory with NVIDIA. At the time, the challenge for enterprises was clear: AI was moving from science project to strategic priority, but the infrastructure and software were fragmented. Customers were struggling with the complexity of standing up massive compute clusters for training, optimization, and inferencing, while ensuring their data remained secure and private.

To solve this, the Secure AI Factory was built upon the foundation of Cisco AI PODs, our modular reference architecture based on Cisco Validated Designs and NVIDIA Enterprise Reference Architecture–compliant deployments. By integrating Cisco networking, compute, and partner storage with NVIDIA’s accelerated infrastructure, the full-stack AI POD allows enterprises to seamlessly deploy AI applications with enterprise-grade security fused into the fabric. Embedded into each layer of the reference architecture and wrapped around it is the Cisco portfolio of security and observability solutions. Together, these give businesses a reliable, secure, hardened path to training, optimization, and inferencing within the core data center.

The rise of multi-agents everywhere

In the last twelve months, the landscape of enterprise AI has shifted. We have moved beyond simple generative AI chatbots and have now transitioned into the era of autonomous agents that drive business-critical functions. The major driver today is the need to run these multi-agent systems everywhere: in the core, in the cloud, and increasingly, at the edge.

However, this shift has revealed a massive hurdle: Security is currently the single biggest barrier to the enterprise adoption of AI agents.

Unlike a standard chatbot, an agent is autonomous; it has the power to invoke APIs, access data lakes, and make decisions, thus enabling teams to decrease time to value. These agents rely on large language models (LLMs) and small language models (SLMs) to provide reasoning context. If the model providing that context is attacked or goes down, the impact is catastrophic:

- Compromised operational decision making: If an agent managing warehouse logistics is hijacked through prompt injection, it could be manipulated to authorize incorrect shipping priorities or “ghost” inventory, leading to significant margin erosion and unfulfilled customer orders.

- Critical workflow stagnation: In a high-velocity environment, if an edge model goes down or its reasoning is corrupted, the automated process stops. For a warehouse, this means trucks aren’t loaded and the supply chain grinds to a halt, directly impacting the quarterly bottom line.

- Regulatory and compliance exposure: Agents operating at the edge often handle sensitive data. A security breach that leads to the exfiltration of personally identifiable information (PII) or proprietary predictive models can result in massive regulatory fines and a permanent loss of customer trust.

Extending Secure AI Factory across the core to the edge

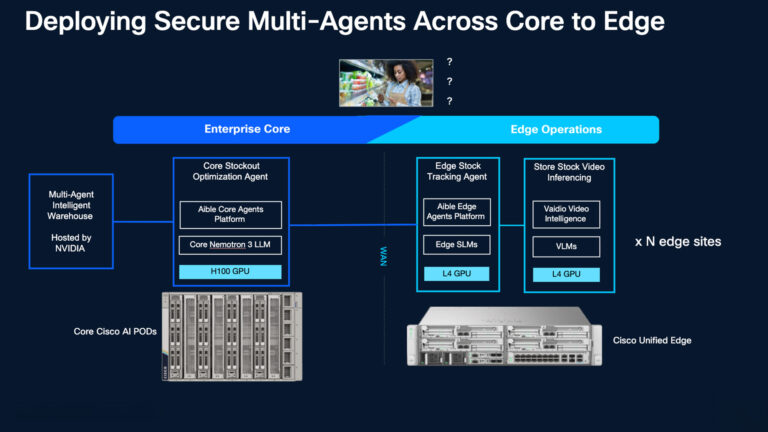

To meet these challenges, Cisco is announcing that it’s expanding Cisco Secure AI Factory from the data center to the edge, ensuring security follows the agent across the entire multi-agent spectrum.

With Cisco AI Defense, we provide a consistent security posture regardless of location. Whether an agent is running a trillion-parameter LLM in the data center or a specialized SLM in a remote warehouse, Cisco ensures the model is validated and the prompts are sanitized in real time.

A new reference architecture: Predicting stockouts for retail or manufacturing warehouses with Cisco Secure AI Factory with NVIDIA and its ISV ecosystem

While the Cisco AI POD provides a high-performance engine in the data center, a new challenge for some organizations lies on the warehouse floor. At GTC, we are showcasing how a warehousing team can leverage Cisco and partner architecture to find value through visibility: knowing the exact moment a stockout occurs or a safety hazard arises before it impacts the morning shift. For IT, the challenge is bridging the IT/OT gap by deploying and securing AI across hundreds of remote sites without creating a management nightmare. Extending visibility and operations from the core data center to the edge is about more than just hardware; it’s about getting the right intelligence to the right person, securely and at scale.

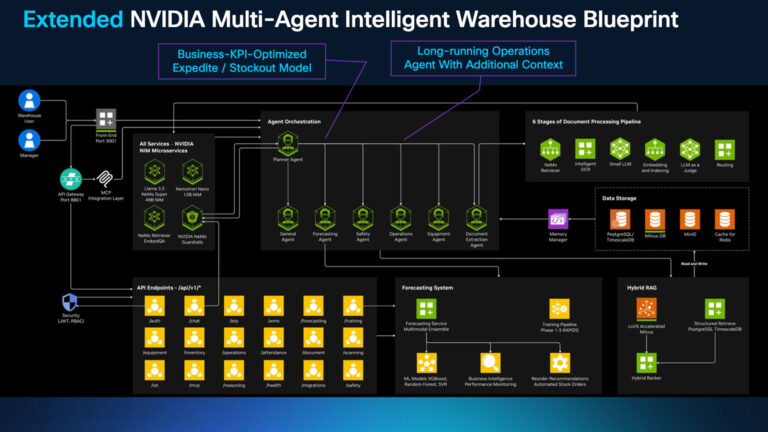

At GTC, we are demonstrating this expansion through a real-world solution extending the NVIDIA Multi-Agent Intelligent Warehouse blueprint. This demo showcases how specialized agents can bridge the gap between IT and OT layers.

How the solution components coordinate

The workflow begins at the edge, where Vaidio acts as the “eyes” of the warehouse, monitoring video feeds for events like pallet stockouts. When a stockout or low stock event is detected, Vaidio triggers a REST API call to an Aible agent running locally on Cisco Unified Edge. This agent uses an SLM to provide immediate reasoning context—deciding if a stockout is critical enough to disrupt a pending shipment. If action is required, the edge agent pings a core agent in the Cisco AI POD hosted in the data center, which queries the enterprise data lake and LLM to calculate the revenue impact.

For the warehouse manager, this closes the IT/OT gap entirely. Instead of manually cross-referencing spreadsheets or discovering empty shelves too late, the manager has a 24/7 digital assistant that identifies problems, calculates the business cost, and triggers an expedited order automatically, eliminating “ghost inventory” and keeping the supply chain moving.
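The coordination flow described in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Vaidio or Aible API: the `StockEvent` payload, the function names, and the fixed rule standing in for the SLM's reasoning are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class StockEvent:
    """Hypothetical payload a vision system might POST to the edge agent."""
    sku: str
    units_on_shelf: int
    units_in_pending_shipments: int


def is_critical(event: StockEvent) -> bool:
    """Stand-in for the edge SLM's reasoning step (a fixed rule here)."""
    return event.units_on_shelf < event.units_in_pending_shipments


def core_revenue_impact(event: StockEvent, unit_price: float) -> float:
    """Stand-in for the core agent's data-lake query and LLM analysis."""
    shortfall = event.units_in_pending_shipments - event.units_on_shelf
    return max(shortfall, 0) * unit_price


def handle_event(event: StockEvent, unit_price: float) -> dict:
    """Edge handler: decide locally, escalate to the core only when needed."""
    if not is_critical(event):
        return {"sku": event.sku, "escalated": False, "revenue_at_risk": 0.0}
    impact = core_revenue_impact(event, unit_price)
    return {"sku": event.sku, "escalated": True, "revenue_at_risk": impact}
```

The point of the split is that most events are resolved at the edge, and only those the local check marks as critical incur a round trip to the core.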

The solution architecture

- Vaidio (the eyes): Running on Cisco Unified Edge nodes (3-4 nodes per site), Vaidio computer vision containers utilize NVIDIA L4 GPUs to monitor the floor for stockouts or safety hazards.

- Aible (the brain): An agentic platform orchestrating the workflow across the edge and core. When Vaidio detects an issue and reports it through a RESTful API call, an Aible agent at the edge uses a local SLM (such as Nemotron-3 Nano or Llama 3.3) to provide immediate reasoning context.

- Cisco AI POD (the core): For heavy-duty predictive modeling, edge agents communicate back to the core AI POD (powered by NVIDIA GPUs). This long-running core agent, powered by Aible, balances the impact of stockouts against the cost of expedited shipping: it queries the warehouse data lake, makes decisions based on revenue impact, and invokes shipping APIs.

- The deployment package: This solution runs Kubernetes 1.34 on Ubuntu 24.04 LTS with NVIDIA Driver 580.95.05 across both edge nodes and core AI POD infrastructure.
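The trade-off the core agent weighs, stockout impact against the cost of expedited shipping, can be illustrated with a simple threshold rule. This is only a sketch: the function name, its parameters, and the decision rule are assumptions made for illustration, and the actual agent's logic is not described in this post.

```python
def should_expedite(units_short: int, margin_per_unit: float,
                    expedite_cost: float) -> bool:
    """Return True when the margin lost to the stockout exceeds the shipping premium.

    All inputs are hypothetical; a real agent would derive them from the
    warehouse data lake rather than take them as arguments.
    """
    lost_margin = units_short * margin_per_unit
    return lost_margin > expedite_cost


if __name__ == "__main__":
    # 40 units short at 12.50 margin each is 500.00 at risk versus a 350.00 premium.
    print(should_expedite(40, 12.5, 350.0))
```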

Cisco Intersight provides centralized AI server fleet management across core and edge, delivering infrastructure lifecycle control, policy consistency, configuration governance, and operational visibility across distributed AI environments.

This ensures the underlying compute platform remains consistent, secure, and scalable, while application and container orchestration operate within a validated, enterprise-ready infrastructure foundation.

Cisco AI Defense and comprehensive security for AI agents and applications

There’s a new reality in the enterprise: multi-agent systems. And Cisco is the only vendor that embeds security into the fabric of the AI Factory, protecting everything from the infrastructure to the agents that live on it.

Cisco AI Defense provides a dual-layer shield from the data center to the edge, complemented by a third layer of platform security in Cisco Unified Edge:

- Model integrity and scanning: AI Defense scans the LLMs and SLMs through their exposed APIs/endpoints. This identifies potential vulnerabilities in model files and catalogs the “AI Bill of Materials” (AI-BOM) to ensure supply chain integrity.

- Runtime guardrails: As agents communicate, AI Defense applies real-time policies to sanitize prompts and responses. It detects prompt injections, prevents the generation of toxic content, and ensures that sensitive data—including PII, PHI, and PCI—never leaves the secure environment.

- Platform security: Cisco Unified Edge adds a physical and firmware layer of protection, including firmware roots of trust, locking bezels with intrusion detection, and Intel TDX/SGX for confidential computing.

The Aible distributed agent solution highlighted above benefits from all three layers of security. It runs securely on Cisco Unified Edge and Cisco AI POD servers. The models it uses at the core and the edge are scanned by AI Defense. All language model interactions by agents at the core and edge use the runtime guardrails by default.
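To make the runtime guardrail idea concrete, here is a toy prompt filter that blocks an obvious injection phrase and redacts two kinds of sensitive data. It is not the Cisco AI Defense API or its detection method: the function name, the regular expressions, and the phrase list are assumptions chosen for illustration, and a production guardrail would rely on far richer detection.

```python
import re

# Illustrative patterns only (assumptions, not a product's rule set).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
INJECTION_HINTS = ("ignore previous instructions", "disregard the system prompt")


def apply_guardrails(prompt: str) -> tuple[bool, str]:
    """Return (allowed, sanitized_prompt).

    Blocks prompts containing a known injection phrase and redacts
    email addresses and US SSN-shaped numbers from allowed prompts.
    """
    lowered = prompt.lower()
    if any(hint in lowered for hint in INJECTION_HINTS):
        return False, ""
    sanitized = EMAIL.sub("[REDACTED_EMAIL]", prompt)
    sanitized = SSN.sub("[REDACTED_SSN]", sanitized)
    return True, sanitized
```

Applying the same filter to model responses as well as prompts mirrors the "prompts and responses" wording above, since sensitive data can leak in either direction.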

The future of multi-agents everywhere

The move to multi-agent AI represents the next industrial revolution of intelligence. By solving the challenges of edge deployment and model security, Cisco is helping customers move past the experimental phase and into real-world production.

By bringing the NVIDIA Multi-Agent Intelligent Warehouse blueprint to life on Cisco architecture, we are delivering value to both sides of the business. The warehouse manager gains a 24/7 digital coordinator through Vaidio and Aible that automates critical workflows and eliminates “ghost inventory.” At the same time, the IT team gains a secure, “drift-free” platform managed with Cisco Intersight and protected by AI Defense. This isn’t just a technical achievement; it’s a production-ready solution that turns AI potential into a competitive advantage for the entire enterprise.

Stay tuned for our upcoming technical white paper, which will provide a deep dive into the architecture of the Secure Multi-Agent AI Factory.

Ready to accelerate your time to value? Contact your Cisco account representative today to learn how to extend your secure AI strategy from the core to the edge, or click here to learn more about the Cisco Secure AI Factory with NVIDIA.